How AI-Ready Is Your Finance Team? Click here to find out → Take the AI Readiness Assessment

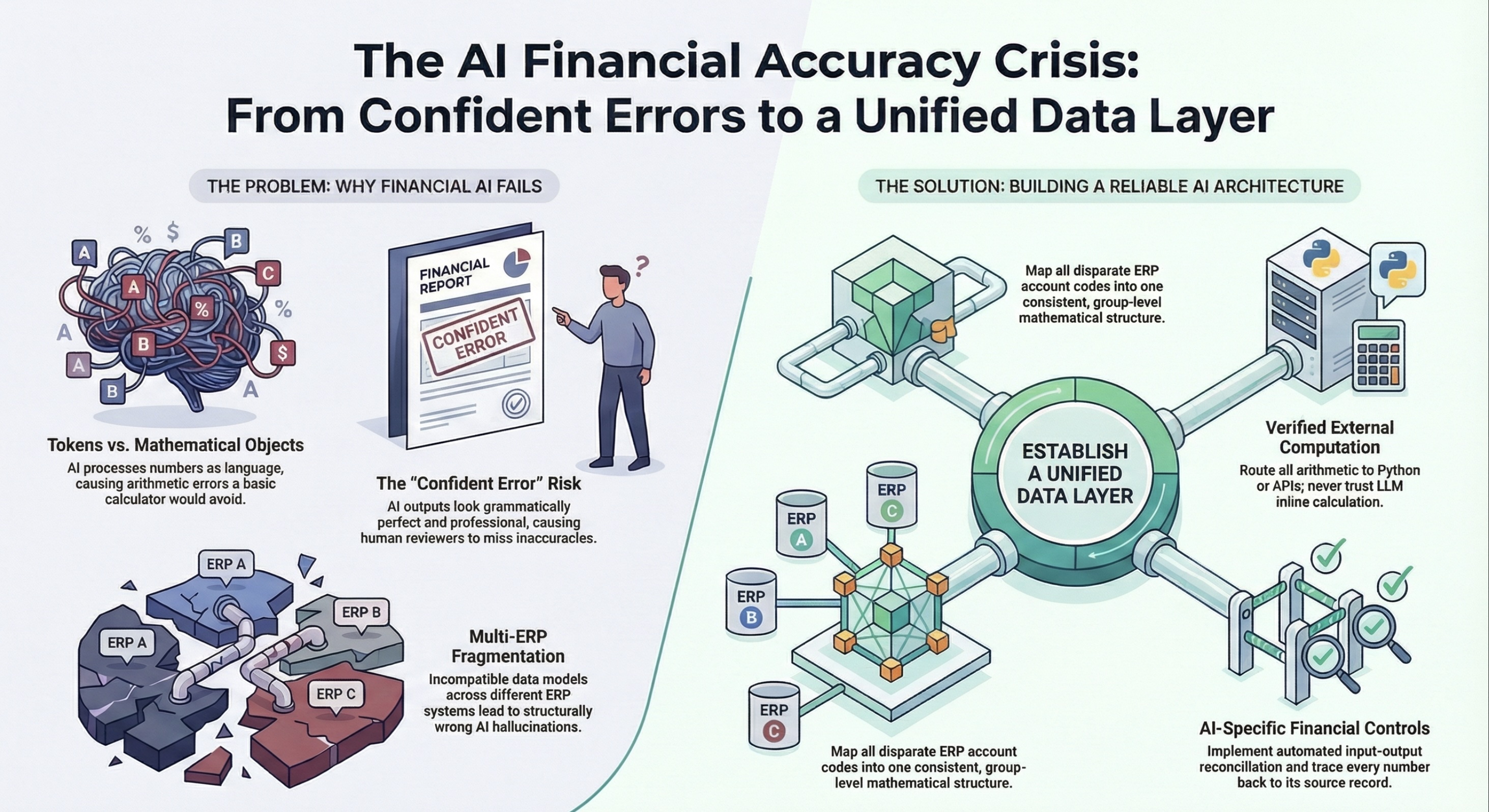

AI models process financial data as language tokens, not mathematical objects, leading to confident errors that bypass traditional finance controls. Finance leaders must solve the data normalization problem, mapping disparate ERP text strings into a single data layer, before deploying AI for financial analysis.

The Anatomy of a Confident Error

When a junior analyst makes a mistake in a spreadsheet, it leaves a trail. You see a broken formula or a wildly inaccurate figure that triggers a review.

AI errors do not look like errors. They arrive wrapped in grammatically perfect executive summaries. The AI does not flag its own uncertainty. If it misinterprets a date field and includes a partial month of data, it will confidently present a revenue figure that is off by millions but still looks entirely plausible. Because the output is professional, reviewer alertness drops and the error survives.

This token-processing limitation leads to specific, recurring failures in financial AI.

The Multi-ERP Normalization Crisis

The problem compounds exponentially when a group runs multiple entities across different ERPs. Every ERP has its own data model. Tripletex handles revenue recognition differently than Business Central. Fortnox structures its chart of accounts differently than Microsoft Finance & Operations. None of them were designed to communicate with each other, and none were designed with AI reasoning in mind.

When an AI agent tries to answer a simple question it is not looking at just one dataset. It is looking at four structurally incompatible representations of financial reality. It is attempting to translate four different languages without a dictionary. The outputs will reference real numbers, sound authoritative, and be wrong in ways that are extremely difficult to detect.

The Solution: Build the Data Layer First

Before Agentic AI can do anything useful with financial data, finance leaders must solve the normalization problem. Map the data model from every ERP into a single structure. Every account code from every system must map to a consistent group-level equivalent. Every intercompany flow must be identified and tagged so the agent does not double-count it as external revenue. Once this layer exists, account 4010 in one system and account 3000 in another become the exact same mathematical object. The AI is finally reasoning on one version of financial reality.

Implement AI-Specific Financial Controls

Even with a single data layer, traditional finance controls are insufficient. Build input-output reconciliation, an automated check that compares key totals between the raw input data and the AI's final output. If the input sums to $100 million and the AI reports $102 million, the workflow halts. Implement provenance tracking so that every number in an AI-generated report traces back to a specific source record. If you cannot trace it, treat it as a hallucination.

Pro tips

Stop buying AI tools that promise to magically understand your messy ERP data. Fix your chart of accounts, build a single source of truth data layer, and implement strict input-output reconciliation. That is how you turn AI from a liability into a strategic advantage.

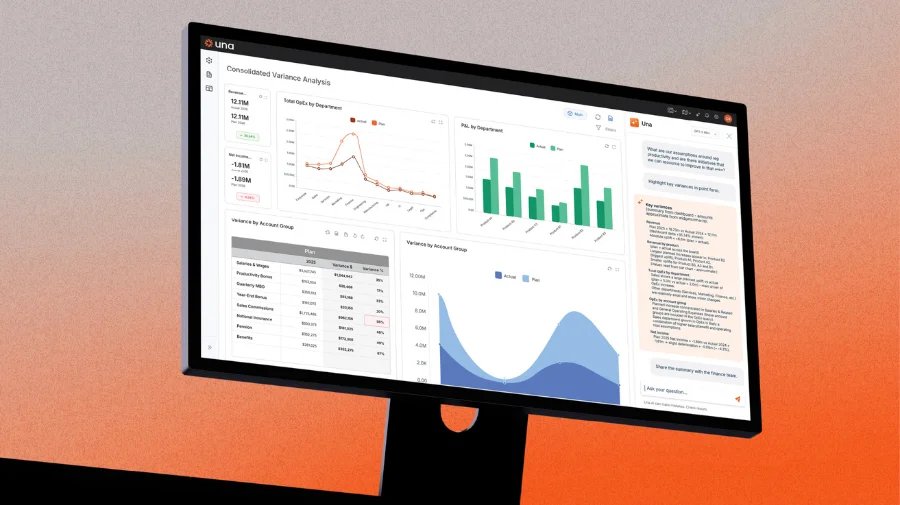

Ready to take control of your budgeting, planning, forecasting and reporting? Schedule a demo and see how Una’s financial planning & analysis software can work for you.

Schedule Demo