We've been doing deep research on which companies are genuinely winning at AI-enabled FP&A right now - not just buying software, but actually transforming how their finance teams operate. After synthesizing data from Gartner, McKinsey, Deloitte, KPMG, IBM, Workday, FP&A Trends, and a handful of enterprise case studies, a very consistent pattern emerged. Sharing the full breakdown here.

The companies winning at AI FP&A aren't the ones with the fanciest models. They're the ones who fixed their operating model first.

Insights from Una.AI — AI-Powered FP&A & Performance Planning

📊 The Numbers First

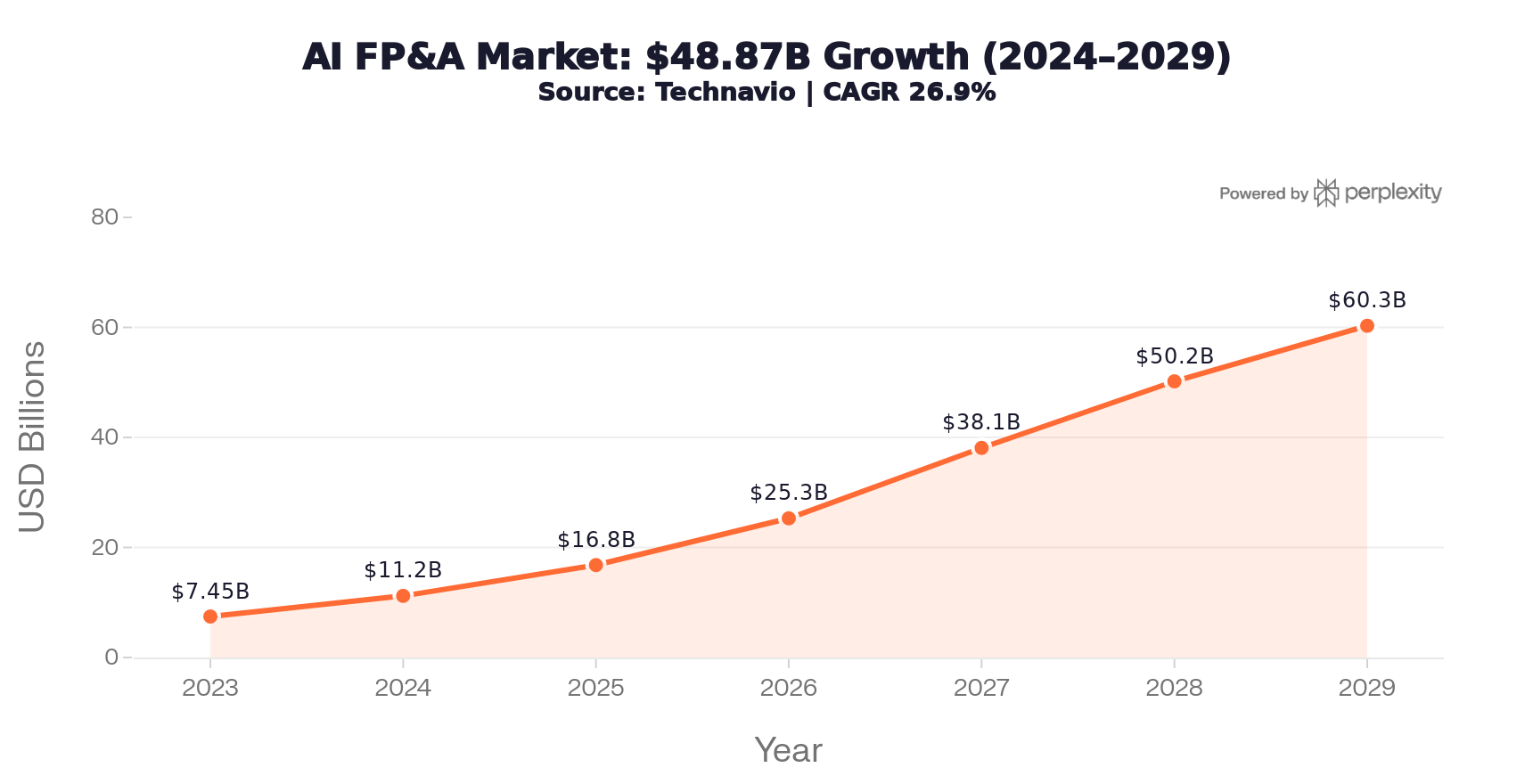

The AI in FP&A market is expected to grow by $48.87 billion at a 26.9% CAGR (Technavio). That's not hype - it's budget.

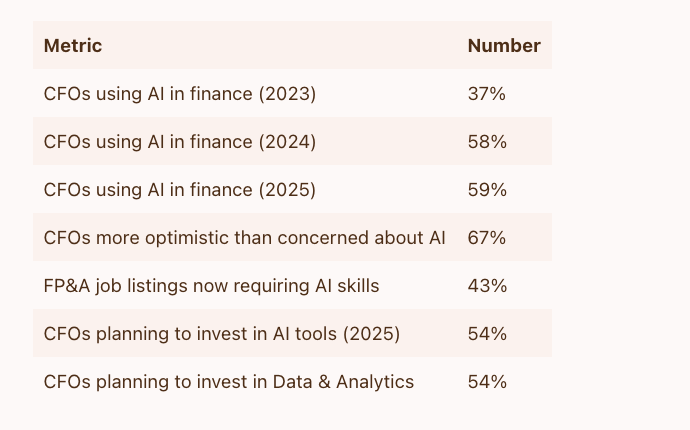

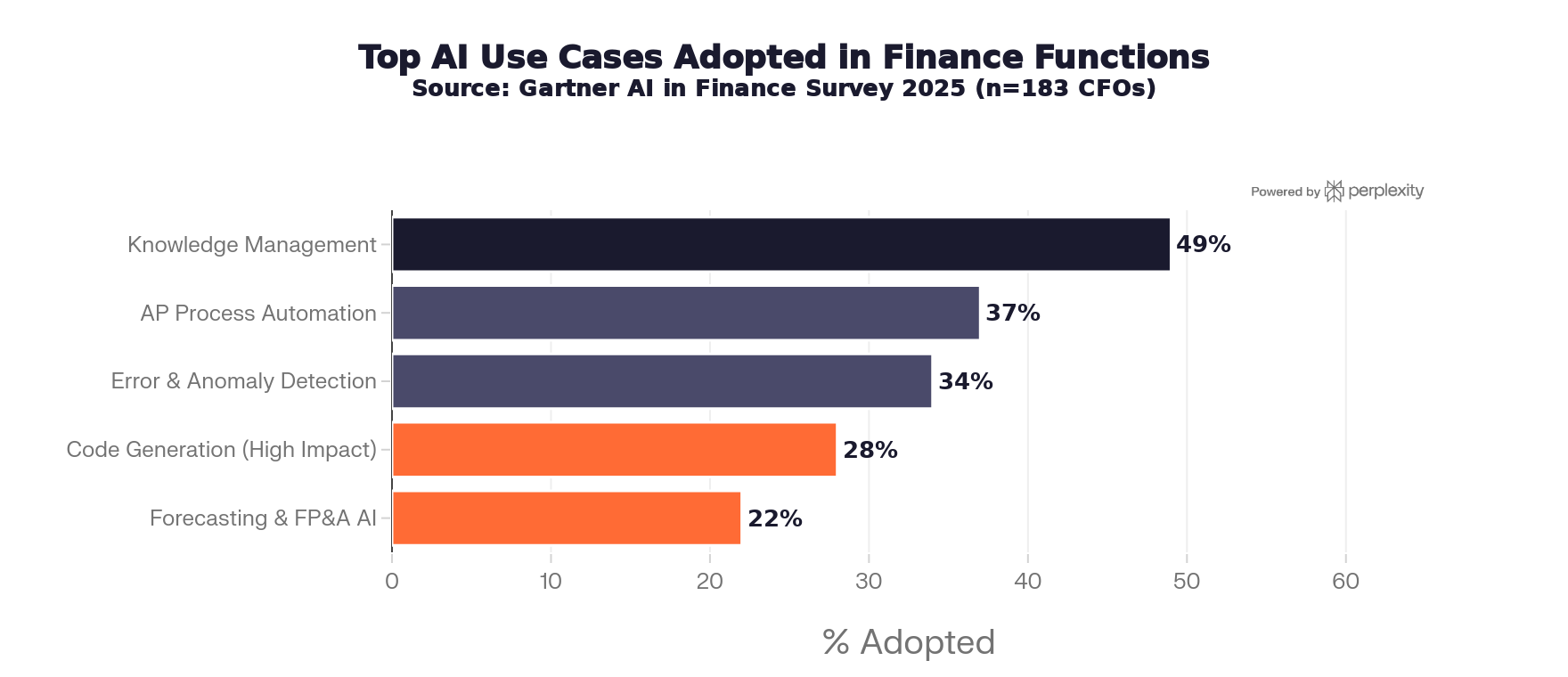

Here's where CFOs actually stand on AI right now (Gartner, 2025 survey):

The adoption plateau from 2024 to 2025 is interesting. Gartner noted that adoption "slowed" slightly, but CFO optimism jumped. What that likely means: the early hype-adopters hit walls (bad data, unclear governance), while more deliberate teams are now getting real results.

🏆 Which Companies Are Actually Winning?

The strongest examples of AI-enabled FP&A come from enterprises that treated it as an operating model problem, not a software problem.

Microsoft — Their AI-powered finance transformation (documented with EY) focuses on embedding AI into finance workflows end-to-end, not deploying point tools. They redesigned processes before layering in automation.

Unilever — Data unification was the centerpiece. Before rolling out AI-driven planning, they invested heavily in making sure their data model was coherent across regions and functions. The result: better scenario visibility and faster business decisioning.

IBM Planning Analytics customers — Consistent pattern: planning AI layered onto well-governed enterprise models. The AI didn't create the governance — teams had to build it first.

Workday / Oracle leaders — These platforms' best customers use AI for driver-based planning, anomaly detection, and narrative generation. The differentiator isn't the tool — it's how the finance team is structured around it.

The 5 Patterns That Separate the Best AI Finance Teams

After looking across all the case studies and practitioner research, five things show up consistently in the leading teams:

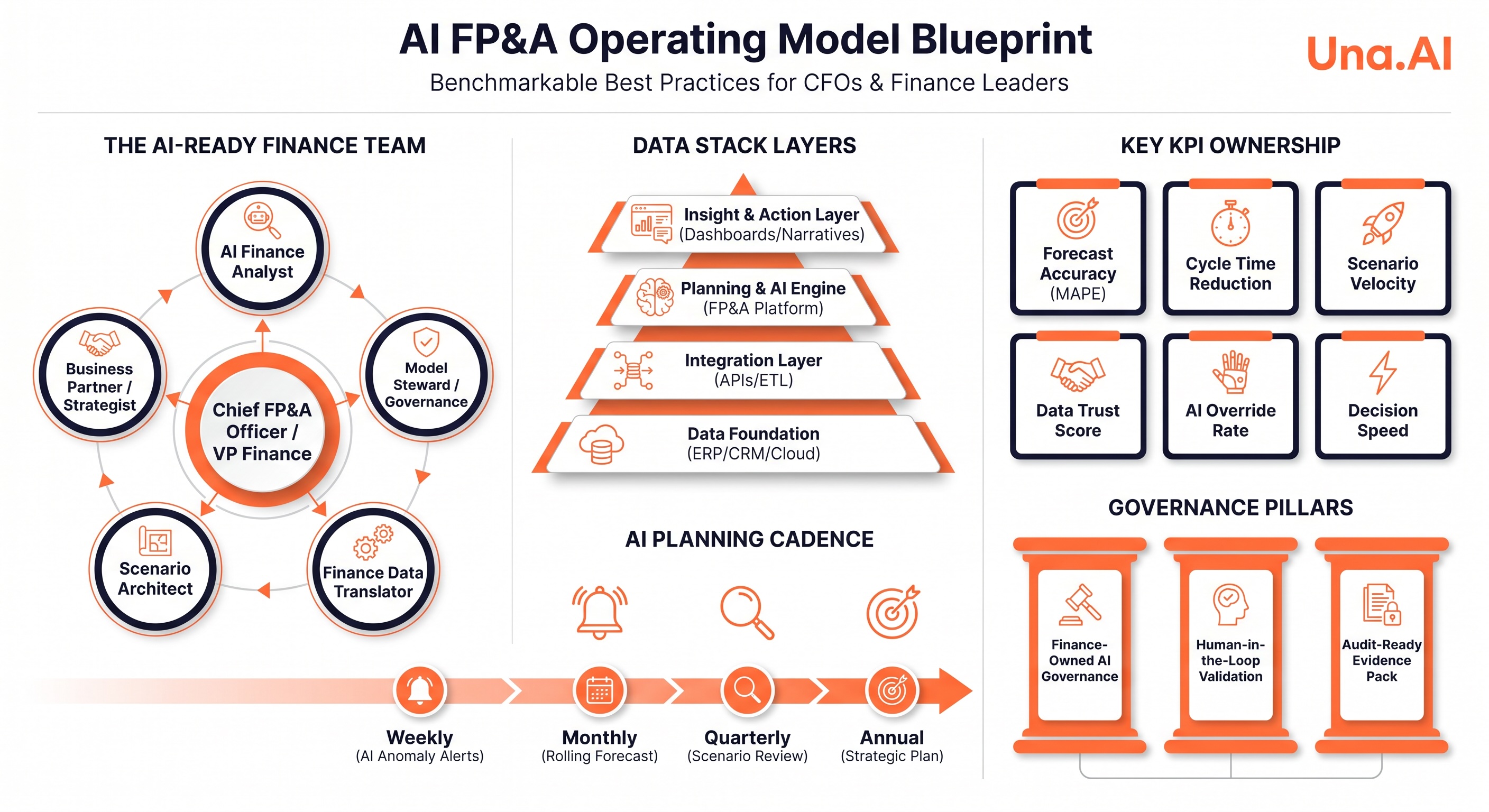

1. Hub-and-Spoke Team Structure

Top teams are moving away from centralized monolithic FP&A and toward a hub-and-spoke model:

New roles are appearing: AI Finance Analyst, Finance Data Translator, Scenario Architect, Model Steward, Insight/Narrative Lead. The traditional analyst role is shifting from spreadsheet assembly to exception management and decision framing.

2. Layered Data Stack (Not Monolithic)

The winning data architecture is a stack, not a single platform:

Finance leaders consistently say data quality and lineage matter more than adding advanced models early. The sequencing: data foundation → planning harmonization → AI augmentation → autonomous recommendations (only after controls are proven).

3. Finance-Owned Governance (Not IT-Owned)

This is probably the most underrated pattern. The best teams don't delegate AI governance to IT or to the vendor. Finance owns it.

What finance-owned governance actually means:

FP&A Trends and recent governance research are calling this the "accountable AI" model for CFOs: every important output must be explainable, reviewable, and linked to approved drivers or scenarios. Not just for compliance — for trust.
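To make "explainable, reviewable, and linked to approved drivers" concrete, here is a minimal Python sketch of a review record with human-in-the-loop approval and override logging. The `ForecastOutput` class, its field names, and its validation rules are hypothetical, chosen only for illustration; they are not taken from any vendor product or from the research cited in this post.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ForecastOutput:
    """One AI-generated figure awaiting finance review (hypothetical schema)."""
    metric: str
    ai_value: float
    driver_ids: list[str]   # approved drivers the figure is linked to
    explanation: str        # plain-language rationale for the reviewer
    final_value: Optional[float] = None
    reviewer: Optional[str] = None
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, action: str, reviewer: str, note: str = "") -> None:
        # Append-only trail so every decision can be reconstructed later.
        self.audit_log.append({
            "action": action,
            "reviewer": reviewer,
            "note": note,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def approve(self, reviewer: str) -> None:
        # Refuse to approve anything that is unexplained or unlinked.
        if not self.driver_ids or not self.explanation:
            raise ValueError("output must be explained and linked to drivers")
        self.final_value = self.ai_value
        self.reviewer = reviewer
        self._log("approve", reviewer)

    def override(self, reviewer: str, new_value: float, reason: str) -> None:
        # An override is allowed, but only with a recorded reason.
        if not reason:
            raise ValueError("an override needs a recorded reason")
        self.final_value = new_value
        self.reviewer = reviewer
        self._log("override", reviewer, reason)


# Example: an analyst overrides the AI figure and the reason is logged.
output = ForecastOutput("Q3 revenue", 120.0, ["price", "volume"],
                        "Volume uplift from new region")
output.override("analyst_a", 115.0, "Contract start delayed")
```

The point of the sketch is the design choice, not the schema: approvals and overrides both leave a trace, so the override rate and the reasons behind it can be reviewed by finance rather than inferred after the fact.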

4. Continuous Planning Cadence

Winning teams blew up the annual budget calendar. They replaced it with:

| Cadence | Activity |
|---|---|
| Weekly | AI anomaly detection, business alerts, variance flagging |
| Monthly | Rolling forecast refresh, driver review |
| Quarterly | Full scenario review, strategic assumptions update |
| Annually | Strategic plan refresh anchored in the same core model |

The key insight: AI delivers disproportionate value when it shortens the time between signal detection and management action. The calendar-driven budgeting cycle was always the bottleneck - these teams removed it.
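As a rough illustration of the weekly variance-flagging step, the sketch below compares actuals to forecast and surfaces the line items that deviate most. It is a simple threshold rule, not an AI model; the function name `flag_variances`, the 10% threshold, and the sample figures are all assumptions made for the example.

```python
def flag_variances(actuals, forecasts, threshold=0.10):
    """Return (item, variance) pairs where actual deviates from forecast
    by more than `threshold`, largest deviation first.

    Variance is (actual - forecast) / |forecast|, so -0.12 means the
    actual came in 12% below forecast.
    """
    flagged = []
    for item, forecast in forecasts.items():
        actual = actuals.get(item)
        if actual is None or forecast == 0:
            continue  # no actual yet, or percentage variance is undefined
        variance = (actual - forecast) / abs(forecast)
        if abs(variance) > threshold:
            flagged.append((item, round(variance, 4)))
    return sorted(flagged, key=lambda pair: abs(pair[1]), reverse=True)


# Hypothetical weekly figures, in millions.
forecasts = {"revenue": 100.0, "cogs": 40.0, "opex": 30.0}
actuals = {"revenue": 88.0, "cogs": 41.0, "opex": 36.0}

for item, variance in flag_variances(actuals, forecasts):
    print(f"{item}: {variance:+.1%} vs forecast")
```

Sorting by absolute deviation puts the exceptions that most need management attention at the top, which is the "signal to action" gap the cadence is meant to shorten.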

5. Clear KPI Ownership

Best teams define owners for a tight set of AI FP&A metrics. Critically, they measure two different things:

Platform health KPIs:

Business value KPIs:

If you only measure platform KPIs, you're measuring your tech. If you only measure business KPIs, you can't diagnose where things break. You need both.
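For teams that want to start measuring, here is a small Python sketch of three commonly tracked measures: forecast accuracy, forecast bias, and override rate. The definitions used here (accuracy as 1 minus mean absolute percentage error, bias as mean signed percentage error, override rate as overridden outputs divided by total outputs) are one reasonable convention and an assumption of this example; your team may define them differently.

```python
def forecast_accuracy(actuals, forecasts):
    """Accuracy as 1 - MAPE; periods with a zero actual are skipped."""
    errors = [abs(a - f) / abs(a) for a, f in zip(actuals, forecasts) if a != 0]
    if not errors:
        raise ValueError("no periods with a non-zero actual")
    return 1 - sum(errors) / len(errors)


def forecast_bias(actuals, forecasts):
    """Mean signed percentage error; positive means over-forecasting."""
    errors = [(f - a) / abs(a) for a, f in zip(actuals, forecasts) if a != 0]
    if not errors:
        raise ValueError("no periods with a non-zero actual")
    return sum(errors) / len(errors)


def override_rate(total_outputs, overridden):
    """Share of AI outputs that a human reviewer changed."""
    return overridden / total_outputs if total_outputs else 0.0


# Hypothetical three-period example.
actuals = [100.0, 200.0, 400.0]
forecasts = [110.0, 190.0, 400.0]

print(f"accuracy: {forecast_accuracy(actuals, forecasts):.1%}")
print(f"bias: {forecast_bias(actuals, forecasts):+.1%}")
print(f"override rate: {override_rate(40, 6):.1%}")
```

Tracking accuracy and bias together matters because a forecast can look accurate on average while leaning consistently high or low.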

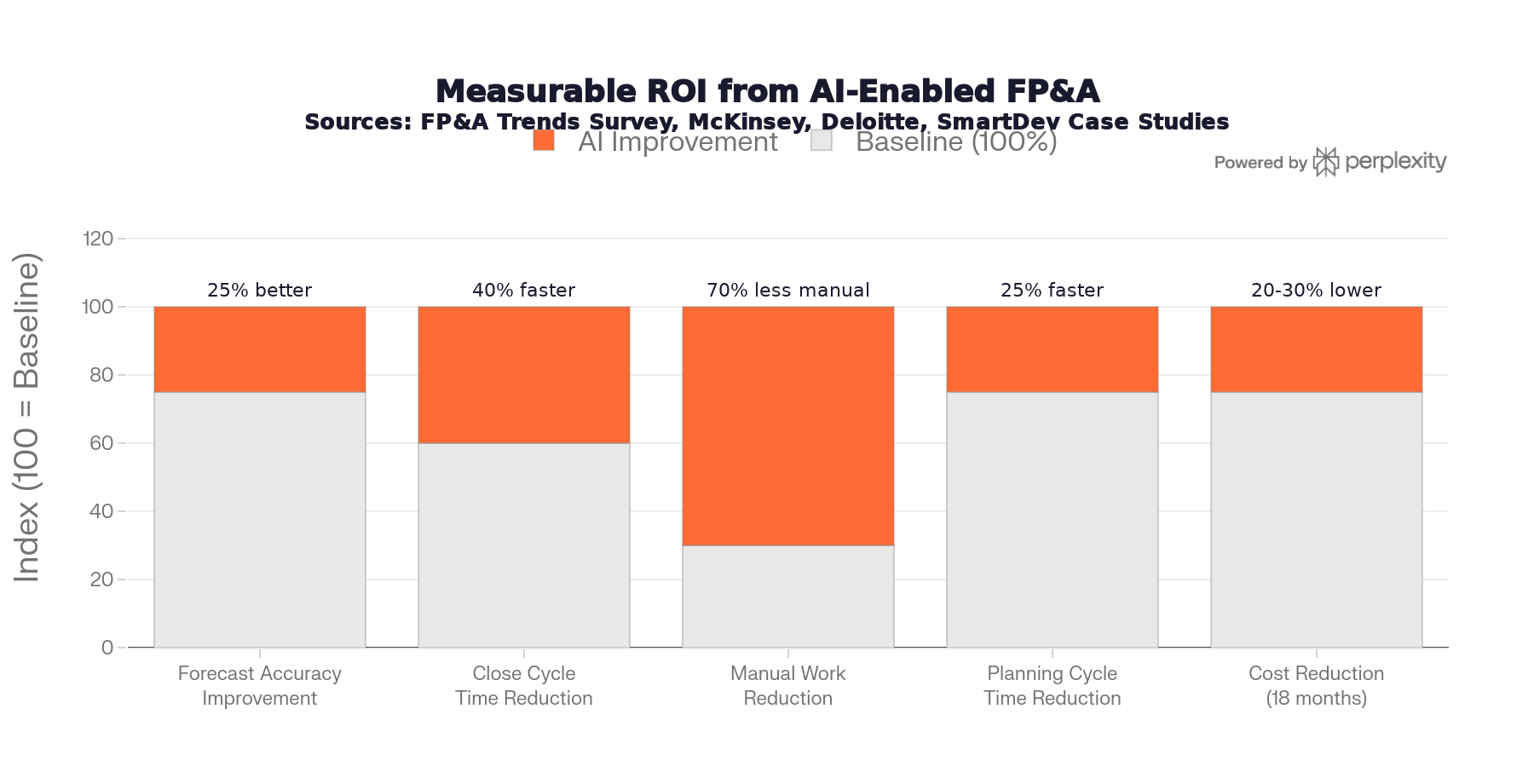

📈 What Good Looks Like: Benchmarks

Across the case studies and practitioner surveys reviewed, here's what leading teams are reporting:

| Impact area | Improvement |
|---|---|
| Forecast accuracy | ~25% better |
| Close cycle time | ~40% faster |
| Manual work reduction | ~70% less |
| Planning cycle time | ~25% faster |
| Cost reduction (18 months) | 20–30% lower |

These aren't universal — they depend heavily on where a team starts. But the direction is consistent across sources.

The 3-Stage Maturity Model

A useful way to benchmark where your team sits:

Stage 1 — Reporting-Centric FP&A:

Low data quality, limited automation, static annual plan, analyst-heavy reporting. Most finance teams still live here.

Stage 2 — AI-Augmented FP&A:

Improved data governance, partial automation, rolling forecasts, AI-assisted analysis. A growing minority of teams.

Stage 3 — AI-Native FP&A:

High data quality, finance-owned AI governance, continuous planning, proactive decisioning, strategic business partnering. This is where the gap is.

The jump from Stage 1 to Stage 2 is mostly a technology problem. The jump from Stage 2 to Stage 3 is an operating model and culture problem.

The Full Operating Model Blueprint

Here's what the complete model looks like when it's working:

| Component | Recommended design | Owner |
|---|---|---|
| Org model | Hub-and-spoke; central CoE + embedded business partners | CFO / VP FP&A |
| Data stack | ERP + CRM + ops data → governed integration → unified planning platform | CFO + CIO |
| AI controls | Human-in-the-loop approvals, override logging, audit-ready records | Finance governance lead |
| Cadence | Weekly anomalies → monthly rolling → quarterly scenarios → annual strategy | FP&A leadership |
| KPI ownership | Forecast accuracy, bias, cycle time, trust score, override rate, decision speed | Shared: FP&A + business finance + data owners |
| Change model | Upskill analysts, redesign workflows, start narrow, then scale | CFO + transformation lead |

🤔 What Most Finance Teams Get Wrong

🏁 Bottom Line for CFOs

The question isn't "should we invest in AI FP&A?" With 54% of CFOs planning to invest this year, that ship has sailed.

The real question is: "Are we building the operating model that makes AI work, or just buying software?"

The companies pulling ahead have all made the same bet: fix the data foundation, restructure the team, own the governance, kill the annual planning calendar. The AI tools are almost secondary to those four decisions.

This post is based on research synthesizing data from Gartner AI in Finance Survey (2025), McKinsey State of AI (2025), Technavio AI FP&A Market Report, FP&A Trends practitioner surveys, Finance Job Market Report (2026), Deloitte AI team structure research, KPMG Modern Finance Operating Models, IBM Planning Analytics case studies, EY/Microsoft finance transformation documentation, and FP&A Trends governance research (2026).

Una.AI is an AI-powered FP&A and Performance Planning platform built for the enterprise.